TLDR

The digital revolution unfolding before us will transform the world as we know it, perhaps even more profoundly than the technological innovations of the 18th-century industrial revolution. Yet while the industrial revolution conjures up images of heavily polluting steam engines and other inefficient industrial machinery, such images are rarely the first to come to mind when we think of the digital revolution. Perhaps they should be...

The potential environmental impact of the digital revolution becomes evident when we look at the large, energy-hungry Data Centres that are in many ways its workhorses. Data Centres are large, organised collections of servers and networking devices that store, transfer and process information over the internet. They are a fundamental part of the modern internet's infrastructure, and many popular internet services, such as search engines and YouTube, would be impossible without them. The rapid digitisation of the world, coupled with the growing adoption of SaaS and cloud-based systems, has further accelerated the growth of large-scale Data Centres.

In 2010, Data Centres accounted for 1.1 to 1.5% of global electricity use, and encouragingly this share has remained roughly constant into 2020 [2] [4]. However, this is still a huge amount of energy, and a report by Anders Andrae forecasts that it could grow to as much as 13% of global electricity consumption by 2030 [2]. The challenge, then, is clear: we must find ways to minimise Data Centre energy consumption. This is where AI comes in.

In 2016, researchers at DeepMind confronted this issue by developing a Machine Learning framework to make Google's (and, in principle, any other operator's) large-scale Data Centres more energy efficient. The potential contribution of this innovation to global efforts against climate change cannot be overstated, especially considering that the amount of computing done in Data Centres increased by about 550% between 2010 and 2018 alone [5].

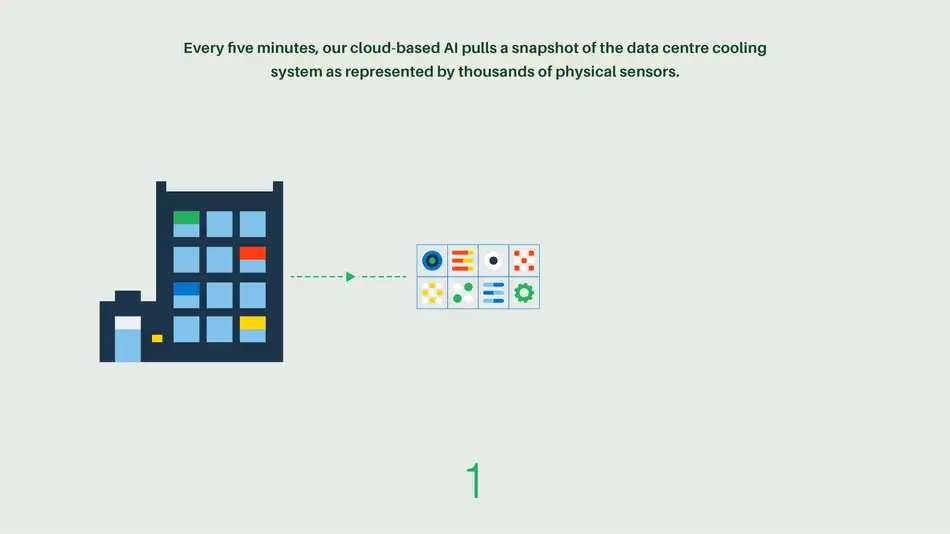

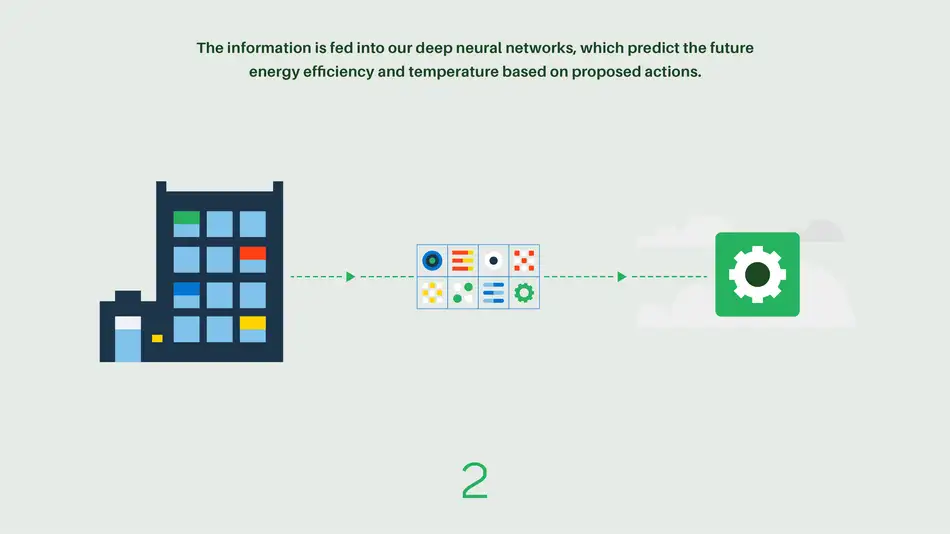

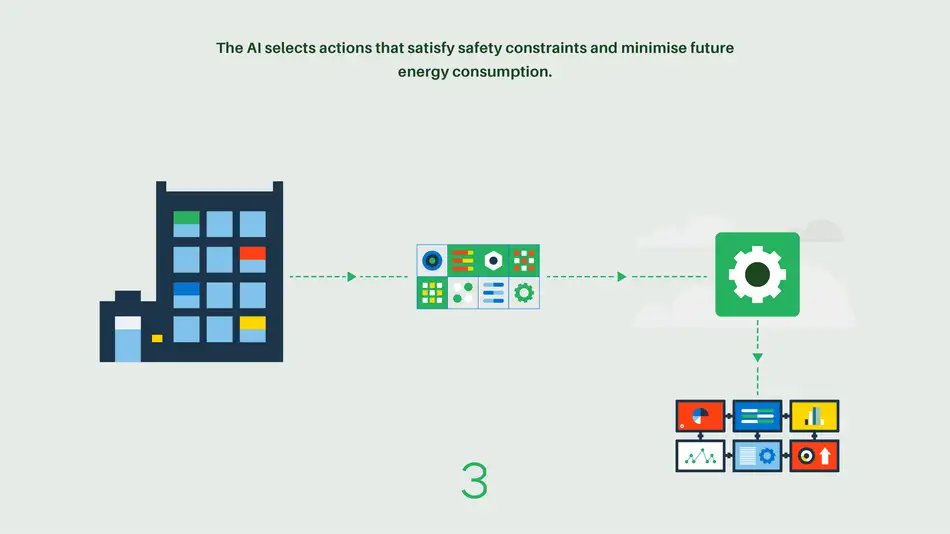

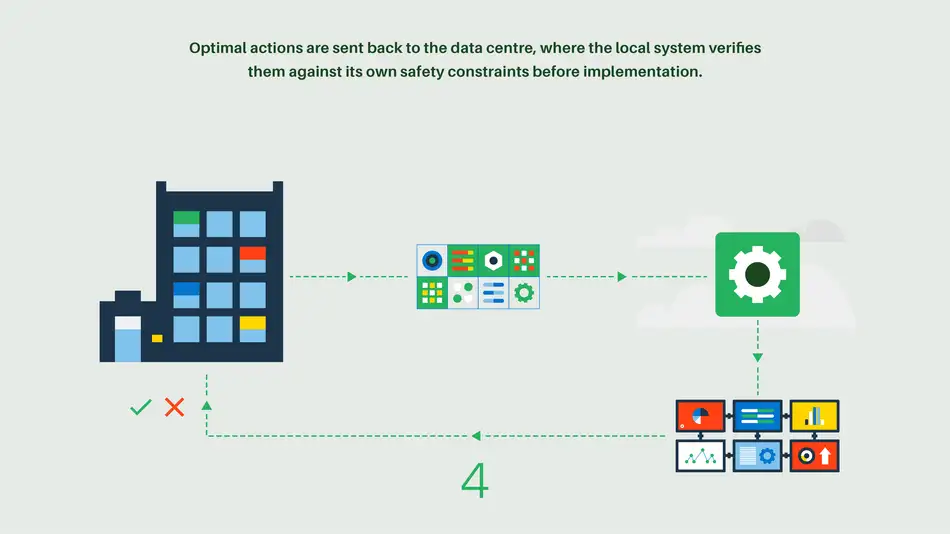

DeepMind's framework collects data from a complex array of sensors across the cooling and electrical equipment and uses it to model potential operating scenarios. Based on this modelling, the framework recommends an ideal set of actions, which are then implemented to reduce future energy consumption. Because the interactions and feedback loops between the myriad variables are so complex, it is extremely difficult to model and predict operating efficiency with standard formulae without incurring large errors, and this is where the machine learning framework has the advantage [1].
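The "model scenarios, then recommend actions" loop can be illustrated with a toy sketch. This is not DeepMind's method: `predicted_pue` is a hypothetical stand-in for a trained model, and the two control variables (a chiller setpoint and a number of running fans) and their candidate values are invented for illustration. The sketch simply evaluates every candidate configuration with the model and picks the one with the lowest predicted PUE; a real system would also enforce safety and equipment constraints.

```python
from itertools import product


def predicted_pue(chiller_setpoint_c: float, fans_running: int) -> float:
    """Hypothetical stand-in for a trained model: returns a made-up PUE estimate."""
    # Toy surface with its minimum at 18 degrees C and 6 fans; a real system
    # would query the trained neural network here instead.
    return 1.10 + 0.002 * (chiller_setpoint_c - 18.0) ** 2 + 0.004 * abs(fans_running - 6)


def recommend_settings(setpoints, fan_counts):
    """Evaluate every candidate configuration and return the one with the lowest predicted PUE."""
    candidates = product(setpoints, fan_counts)
    best_config = min(candidates, key=lambda c: predicted_pue(*c))
    return best_config, predicted_pue(*best_config)


best, pue = recommend_settings([14.0, 16.0, 18.0, 20.0], range(4, 9))
print(best, round(pue, 3))  # prints (18.0, 6) 1.1
```

Exhaustive search is only practical for a handful of discrete options; with many continuous setpoints an optimiser would replace the `product` enumeration, but the idea of scoring scenarios with a learned model stays the same.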

The framework uses neural networks to predict the expected power efficiency of the Data Centre. The approach does not require the complex mathematical relationships between variables to be defined in advance, because a neural network learns an approximation of those relationships directly from its training data. A research paper describing the approach reports that the network had 5 hidden layers of 50 nodes each [1], and the framework used about 2 years of monitoring data collected from Google Data Centres. Feature selection is a crucial part of any machine learning task; here the researchers used 19 normalised input variables (limited to those that fundamentally affect the Data Centre's performance) and one normalised output variable. The chosen output metric, Power Usage Effectiveness (PUE), is the ratio of total facility energy usage to IT equipment energy usage. The ideal PUE value of 1 would mean that none of the energy consumed by a Data Centre facility goes towards cooling or other non-IT uses.
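To make the reported architecture concrete, here is a minimal, untrained sketch in plain Python of a network with 19 normalised inputs, 5 hidden layers of 50 nodes and a single output. Only those three sizes come from the text; the min-max normalisation, sigmoid activations, weight initialisation and all function names are assumptions chosen for illustration, and training (for example by backpropagation) is omitted entirely.

```python
import math
import random

N_INPUTS = 19      # normalised input variables, as reported in [1]
HIDDEN_LAYERS = 5  # hidden layers, as reported in [1]
HIDDEN_NODES = 50  # nodes per hidden layer, as reported in [1]


def min_max_normalize(value, lo, hi):
    """Scale a raw reading into [0, 1] given its observed range (an assumed scheme)."""
    return (value - lo) / (hi - lo)


def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases for one fully connected layer."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def build_network(seed=0):
    """Layer sizes: 19 -> 50 -> 50 -> 50 -> 50 -> 50 -> 1."""
    rng = random.Random(seed)
    sizes = [N_INPUTS] + [HIDDEN_NODES] * HIDDEN_LAYERS + [1]
    return [init_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]


def forward(network, x):
    """Propagate one normalised input vector; returns a single (normalised) PUE estimate."""
    for i, (weights, biases) in enumerate(network):
        z = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]
        # Hidden layers use a sigmoid (an assumption); the output layer stays linear.
        x = z if i == len(network) - 1 else [1.0 / (1.0 + math.exp(-v)) for v in z]
    return x[0]


net = build_network()
# Hypothetical raw readings, each assumed to lie in the range 0 to 100.
sample = [min_max_normalize(v, 0.0, 100.0) for v in [50.0] * N_INPUTS]
print(forward(net, sample))  # meaningless until the weights are trained
```

Normalising inputs to a common range keeps any single sensor with large raw values from dominating the weighted sums early in training.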

The research produced a robust and reliable way to model and predict the operating efficiency of large Data Centres, allowing operators to simulate different operating configurations without the cost of running physical experiments.

After deploying the machine learning system in Google Data Centres, the team were able to predict PUE with an error of 0.4% for a PUE of 1.1 [1]. At the Data Centre site used in the research, the system achieved a 40% reduction in the energy used for cooling and a 15% reduction in overall PUE overhead [3]. This result is good news because it shows that companies operating Data Centres can reduce their operating costs while simultaneously reducing their carbon emissions. That matters because there is often a trade-off between the two, and it is all too often resolved in favour of economic incentives at the expense of the environment.
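A short worked calculation helps interpret these figures. The facility numbers below (1,000 kWh of IT load plus 100 kWh of overhead) are hypothetical, chosen only so that PUE equals 1.1. DeepMind's blog [3] describes the 15% figure as a reduction in PUE overhead, i.e. the part of PUE above 1, which is a much smaller change than a 15% cut in PUE itself.

```python
def compute_pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy."""
    return total_facility_kwh / it_equipment_kwh


# Hypothetical facility: 1,000 kWh of IT load plus 100 kWh of cooling and other overhead.
baseline = compute_pue(1_100.0, 1_000.0)  # PUE of 1.1

# A 0.4% relative prediction error at PUE 1.1 is roughly 0.0044 PUE units.
absolute_error = 0.004 * baseline

# Overhead is the energy spent beyond the IT load, per unit of IT energy (PUE - 1).
overhead = baseline - 1.0
improved = 1.0 + overhead * (1 - 0.15)  # 15% less overhead

print(round(baseline, 3), round(absolute_error, 4), round(improved, 3))  # 1.1 0.0044 1.085
```

In this hypothetical case, cutting overhead by 15% moves PUE from 1.1 to 1.085, whereas a 15% cut in PUE itself would imply a value below the theoretical minimum of 1.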

Although this framework provides a general way to model Data Centre operations, each Data Centre has unique constraints, so the results achieved may not generalise easily to Data Centres in different environments or with different architectures [3]. Other ways the IT-services industry could reduce its environmental impact include reducing the energy used in semiconductor manufacturing, reducing raw-material waste and sourcing more energy from renewable sources. Perhaps AI can be leveraged to solve some of these challenges as well.

References

[1] Gao, Jim (2014). “Machine Learning Applications for Data Center Optimization.” Google.

[2] Nature (2018). “How to stop data centres from gobbling up the world’s electricity.” https://www.nature.com/articles/d41586-018-06610-y

[3] DeepMind (2016). “DeepMind AI Reduces Google Data Centre Cooling Bill by 40%.” https://deepmind.com/blog/article/deepmind-ai-reduces-google-data-centre-cooling-bill-40

[4] Koomey, Jonathan (2011). “Growth in Data Center Electricity Use 2005 to 2010.” Analytics Press.

[5] Google blog. https://blog.google/outreach-initiatives/sustainability/data-centers-energy-efficient/

[6] 42U. https://www.42u.com/pue-metric.htm